Video teams still talk about subtitles as if they’re an add-on. The viewing data says otherwise. A joint study from Verizon and Publicis Media found that up to 80% of viewers are more likely to finish a video with subtitles, and the same research found that 92% of viewers watch videos with the sound off on mobile devices. For Gen Z, subtitle use is especially common, with 80% using subtitles “most of the time” according to Kapwing’s roundup of subtitle statistics citing the Verizon and Publicis Media study.

That changes how you should think about subtitles. They aren’t just for accessibility compliance. They affect whether your message gets seen, understood, and remembered when someone is on a train, in an office, or scrolling late at night with the volume muted.

The practical part is where many teams get stuck. They know subtitles matter, but they’re less sure about file formats, timing rules, quality control, translation, and how all of that connects to engagement, accessibility, and business outcomes. That full lifecycle is where good subtitle decisions pay off.

Why Subtitles Are No Longer Optional in 2026

If you publish video, subtitles now sit in the same category as clean audio and readable design. They’re part of the base experience.

The strongest reason is simple: subtitles change completion behavior. The Verizon and Publicis Media findings above show that viewers are far more likely to finish subtitled video, and that’s not hard to understand in real life. People watch in public places, in open-plan offices, and on mobile with the sound off. If the spoken message only exists in audio, many viewers never receive it.

This also explains why teams that work on short-form video have become much more deliberate about on-screen text. If your audience encounters your content first in a muted feed, subtitles don’t just support accessibility. They help the first few seconds land. Teams working on social content often see this firsthand when they compare silent-friendly edits with audio-dependent ones, which is why guides on Instagram reel caption benefits have become so relevant to everyday publishing.

Viewing context has changed

A video used to assume attention, headphones, and a bigger screen. That assumption doesn’t hold.

Now the same clip might be watched:

- On a phone in silence: The viewer can see but not hear.

- In a shared environment: Audio may be distracting, inappropriate, or unavailable.

- By someone processing spoken language differently: Reading along may be easier than listening alone.

- Across language boundaries: A subtitle track can make the content usable without changing the original audio.

Practical rule: If your message matters, don’t force viewers to rely on sound to understand it.

Subtitles affect more than accessibility

A lot of confusion comes from seeing subtitles as a “nice to have” for one audience segment. In practice, they support several goals at once:

| Goal | Why subtitles matter |
|---|---|
| Engagement | They help viewers follow the message when audio is off or unclear. |
| Accessibility | They make spoken content more usable for people who need text support. |
| Reach | They help content travel across languages and markets. |
| Operational quality | They turn spoken media into a reviewable, editable asset. |

That’s why subtitle decisions shouldn’t be left until the final export. They belong in production planning, editorial review, localization, and compliance.

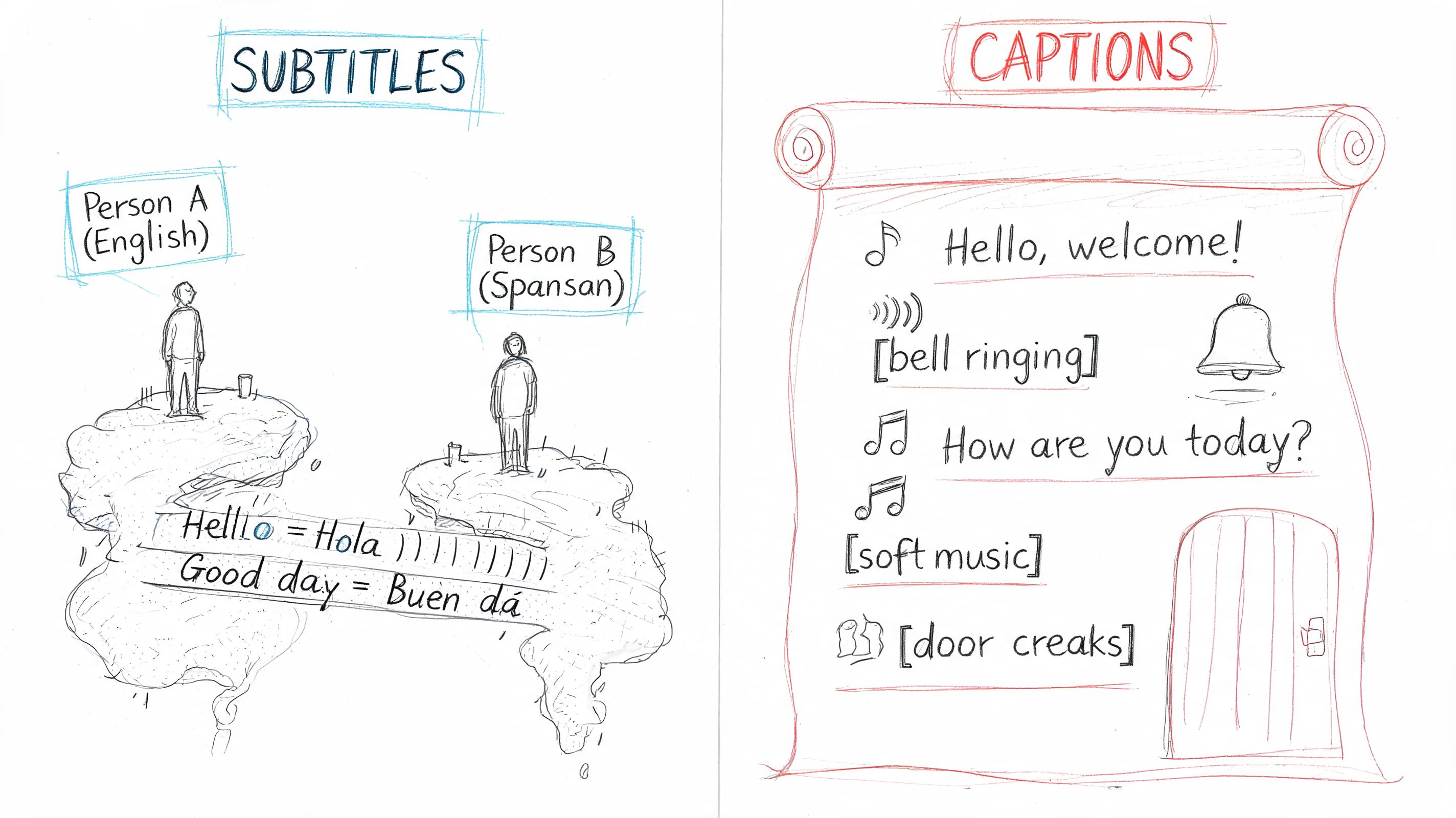

Subtitles vs Captions: The Key Differences

People often use the terms interchangeably. In day-to-day conversation, that’s understandable. In production, it causes avoidable mistakes.

The simplest way to separate them is this: subtitles usually represent spoken dialogue, often for viewers who can hear the audio but need a language translation. Captions include dialogue plus important sound information, so a viewer can understand not only what was said, but also what was heard.

A film script makes a useful analogy. Subtitles are closer to a translated script. Captions are closer to a script with stage directions and sound notes included.

A working mental model

Take a scene where someone says, “Get down,” while a siren blares and glass breaks.

A subtitle might show only:

- Get down.

A caption might show:

- [siren blaring]

- Get down!

- [glass shatters]

That difference matters. If the sound carries meaning, captions preserve it.

The main subtitle and caption types

In real projects, you’ll usually hear these terms:

- Standard subtitles: These focus on dialogue, most often for translation. A French film with English subtitles is the classic example.

- Closed captions (CC): These can usually be turned on or off by the viewer. They include dialogue and meaningful sound cues.

- Open captions: These are burned into the video image. The viewer can’t disable them.

- SDH subtitles: Subtitles for the Deaf and Hard-of-Hearing sit between translation-focused subtitles and accessibility-focused captions. They include additional sound and speaker information needed for comprehension.

Here’s a quick comparison:

| Type | What it includes | Viewer can turn off? | Common use |
|---|---|---|---|
| Standard subtitles | Spoken dialogue | Usually yes | Translation |
| Closed captions | Dialogue plus sound cues | Yes | Accessibility |
| Open captions | Whatever is embedded in the video | No | Social clips, fixed-display environments |
| SDH | Dialogue, speaker labels, key sound context | Usually yes | Accessibility with added context |

Why teams get this wrong

The mistake usually happens at handoff. Someone says “add subtitles,” but what's needed is an accessibility asset with sound descriptions. Or they ask for captions, but what they really need is translation into another language.

That confusion gets more expensive as distribution expands. The global film video subtitles market was valued at approximately USD 8.73 billion in 2024 and is projected to reach USD 13.83 billion by 2033, while 61% of content localizers translate their subtitles to reach international audiences according to CaptioningStar’s review of subtitle globalization trends. Once a team is publishing to multiple regions, these definitions stop being academic. They determine workflow, budget, review needs, and deliverables.

Ask for the deliverable by purpose, not label. “English translation subtitles,” “closed captions with sound cues,” or “burned-in open captions for social” is much clearer than just saying “captions.”

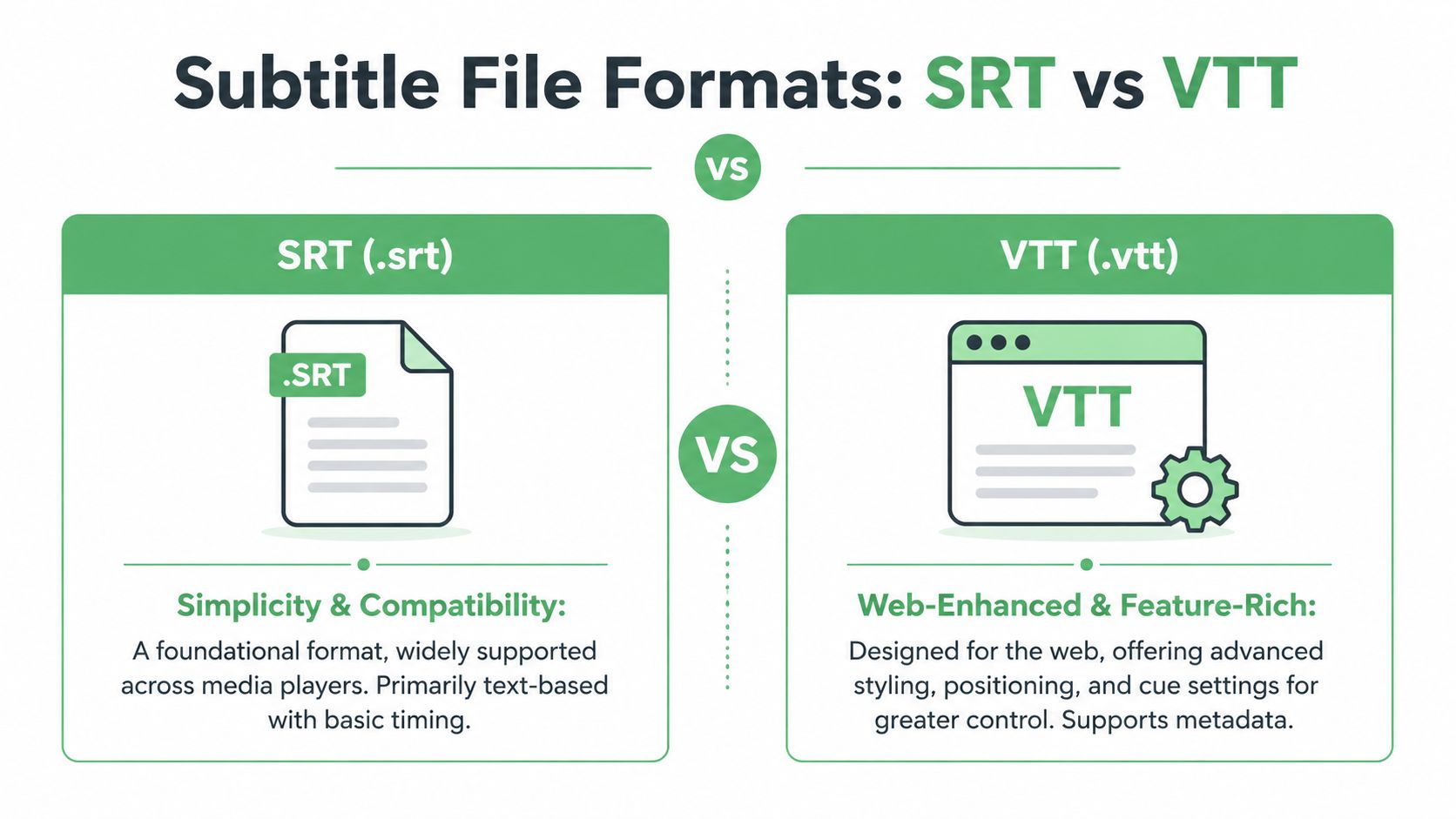

Decoding Subtitle File Formats: SRT vs VTT

Once the text is written and timed, it has to live in a file format your platform understands. Typically, that means choosing between SRT and VTT.

Both store subtitle cues and timestamps. The main difference is how much control you need after upload.

What SRT does well

SRT (.srt) is the plainest subtitle format in wide use. It’s simple, broadly accepted, and easy to inspect in a text editor.

A basic SRT cue looks like this:

    1
    00:00:01,000 --> 00:00:03,500
    Welcome to the product demo.

    2
    00:00:04,000 --> 00:00:06,000
    Let's start with the dashboard.

SRT is a good choice when you want:

- Broad compatibility: Many video platforms and media players accept it without fuss.

- Simple editing: Editors, producers, and QA reviewers can open it directly.

- Straight export workflows: It works well when the goal is “get this subtitle file uploaded.”

Its limitation is that it doesn’t carry much extra information. Styling and positioning are basic or platform-dependent.
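To make the cue structure concrete, here is a minimal sketch of reading SRT text into cue objects. The names parseSrt and toMs are illustrative helpers, not part of any particular library, and the parser assumes well-formed input: an index line, a timing line, then one or more text lines, with blank lines between cues.

```javascript
// Convert an SRT timestamp such as "00:00:03,500" to milliseconds.
function toMs(timestamp) {
  const match = /^(\d{2}):(\d{2}):(\d{2}),(\d{3})$/.exec(timestamp.trim());
  if (!match) throw new Error(`Bad SRT timestamp: ${timestamp}`);
  const [, h, m, s, ms] = match.map(Number);
  return ((h * 60 + m) * 60 + s) * 1000 + ms;
}

// Hypothetical helper: parse well-formed SRT text into cue objects.
function parseSrt(text) {
  return text
    .replace(/\r\n/g, "\n") // normalize Windows line endings
    .trim()
    .split(/\n{2,}/) // cues are separated by blank lines
    .map((block) => {
      const [index, timing, ...lines] = block.split("\n");
      const [start, end] = timing.split("-->");
      return {
        index: Number(index),
        startMs: toMs(start),
        endMs: toMs(end),
        text: lines.join("\n"),
      };
    });
}

const sampleSrt = `1
00:00:01,000 --> 00:00:03,500
Welcome to the product demo.

2
00:00:04,000 --> 00:00:06,000
Let's start with the dashboard.`;

const parsedCues = parseSrt(sampleSrt);
console.log(parsedCues);
```

Holding cues as plain data like this makes later checks (line length, reading speed, sync) much easier than working on raw text.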

Where VTT becomes useful

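VTT (.vtt), short for WebVTT, was designed for web video. It keeps the same basic idea as SRT but starts with a required WEBVTT header line, uses a period rather than a comma before the milliseconds, and adds support for more web-oriented control, including metadata and richer cue settings.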

A simple VTT file looks like this:

    WEBVTT

    00:00:01.000 --> 00:00:03.500
    Welcome to the product demo.

    00:00:04.000 --> 00:00:06.000
    Let's start with the dashboard.

The file feels familiar, but it’s more flexible in browser-based environments. If you need cues that behave more precisely in a web player, VTT usually gives you more room to work.

For web delivery, VTT often makes life easier because it was built with browser playback in mind.

Side-by-side decision guide

| Question | Choose SRT when | Choose VTT when |
|---|---|---|
| Where will it play? | You need broad platform support | You’re targeting HTML5 web players |
| How much styling control matters? | Minimal styling is fine | You need richer control over display behavior |
| Who will edit the file? | Non-technical reviewers may open it directly | Your team works comfortably with web media assets |
| Will the subtitles live mostly on the web? | Not necessarily | Yes |

A practical example

Say a training team exports a webinar for YouTube, internal review, and a website resource library.

- For the upload-heavy path, SRT is often the easiest shared format.

- For the custom website player, VTT may be the better fit because web players often handle it more gracefully.

- If the same transcript needs timestamp cleanup before publishing, a guide on how video timestamps work in editing and review can help teams avoid preventable sync errors before export.

The mistake to avoid

Don’t treat file format as a last-second technical detail. It affects review, player behavior, styling options, and troubleshooting later.

If your subtitles are likely to move across platforms, keep a clean master transcript and export both formats when needed. That gives editors, developers, and platform managers room to work without rebuilding the subtitle track from scratch.
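One way to keep a single master and export both formats is to store cues as plain data and serialize on demand. The sketch below assumes each cue is an object with startMs, endMs, and text fields; those field names and the function names are illustrative, not a standard.

```javascript
// Format milliseconds as HH:MM:SS<sep>mmm. SRT uses a comma, WebVTT a period.
function formatTime(totalMs, separator) {
  const pad = (n, width) => String(n).padStart(width, "0");
  const ms = totalMs % 1000;
  const totalSeconds = Math.floor(totalMs / 1000);
  const s = totalSeconds % 60;
  const m = Math.floor(totalSeconds / 60) % 60;
  const h = Math.floor(totalSeconds / 3600);
  return `${pad(h, 2)}:${pad(m, 2)}:${pad(s, 2)}${separator}${pad(ms, 3)}`;
}

// Serialize cues as SRT: numbered cues, comma before milliseconds.
function toSrt(cues) {
  return cues
    .map((cue, i) =>
      `${i + 1}\n${formatTime(cue.startMs, ",")} --> ${formatTime(cue.endMs, ",")}\n${cue.text}`)
    .join("\n\n") + "\n";
}

// Serialize cues as WebVTT: WEBVTT header, period before milliseconds.
function toVtt(cues) {
  const body = cues
    .map((cue) =>
      `${formatTime(cue.startMs, ".")} --> ${formatTime(cue.endMs, ".")}\n${cue.text}`)
    .join("\n\n");
  return `WEBVTT\n\n${body}\n`;
}

const masterCues = [
  { startMs: 1000, endMs: 3500, text: "Welcome to the product demo." },
  { startMs: 4000, endMs: 6000, text: "Let's start with the dashboard." },
];

const srtText = toSrt(masterCues);
const vttText = toVtt(masterCues);
console.log(srtText);
console.log(vttText);
```

Because both files come from the same cue list, a wording or timing fix made once in the master shows up in every export.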

Best Practices for Readability and Timing

Bad subtitles are distracting. Good subtitles feel invisible. The viewer follows the story and barely notices the mechanics.

That effect comes from a set of small technical decisions. They aren’t arbitrary style rules. They exist because people need time to read, process, and look back at the image.

According to Alpha CRC’s guide to closed captioning and subtitling, professional subtitling standards set a maximum of 47 characters per line and a reading speed of 12 to 15 characters per second, and timing discrepancies over 100 ms can reduce viewer comprehension by up to 30%. Exact limits vary by broadcaster and platform style guide, so treat these figures as a baseline rather than a universal rule.

Keep each subtitle readable

The first job is restraint. Many teams try to fit too much speech into each cue because they don’t want to “lose” any wording. That usually makes the result harder to read.

A useful baseline:

- Limit line length: If a line stretches too wide, the eye travels too far and returns too slowly.

- Use no more than two lines: Dense subtitle blocks compete with the image.

- Respect reading speed: A subtitle can be perfectly transcribed and still unreadable if it flashes by too quickly.

For example, this is hard to read because it’s overpacked:

- We need to discuss the onboarding workflow because legal flagged the current process and product wants revisions before next week’s launch.

A better version breaks the thought into manageable units:

- We need to discuss the onboarding workflow.

- Legal flagged the current process.

- Product wants revisions before next week’s launch.
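The baseline above can be turned into an automated check. This is a rough sketch, assuming cues are objects with startMs, endMs, and text fields (hypothetical names) and using the limits cited earlier in this section as defaults; swap in your own style guide's numbers as needed.

```javascript
// Limits taken from the figures cited in this section; adjust to your style guide.
const LIMITS = { maxCharsPerLine: 47, maxLines: 2, maxCharsPerSecond: 15 };

// Return a list of readability problems for one cue (empty list = no problems).
function checkReadability(cue, limits = LIMITS) {
  const problems = [];
  const lines = cue.text.split("\n");
  if (lines.length > limits.maxLines) {
    problems.push(`too many lines (${lines.length})`);
  }
  for (const line of lines) {
    if (line.length > limits.maxCharsPerLine) {
      problems.push(`line too long (${line.length} chars)`);
    }
  }
  const seconds = (cue.endMs - cue.startMs) / 1000;
  // Spaces are counted as characters here; line breaks are not.
  const chars = lines.join("").length;
  const cps = chars / seconds;
  if (cps > limits.maxCharsPerSecond) {
    problems.push(`reading speed too high (${cps.toFixed(1)} cps)`);
  }
  return problems;
}

const overpacked = {
  startMs: 0,
  endMs: 4000,
  text: "We need to discuss the onboarding workflow because legal flagged the current process and product wants revisions before next week's launch.",
};
const trimmed = {
  startMs: 0,
  endMs: 3000,
  text: "We need to discuss the onboarding workflow.",
};

console.log(checkReadability(overpacked)); // flags line length and reading speed
console.log(checkReadability(trimmed));    // no problems
```

A check like this will not rewrite a cue for you, but it tells an editor exactly which cues need condensing or splitting.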

Break lines by meaning, not by chance

Subtitle line breaks shouldn’t feel random. They should follow natural language units.

Good segmentation:

- Keeps phrases together

- Avoids splitting names from titles

- Preserves one idea per cue when possible

Less effective:

- We need to approve the

- final legal language today.

Better:

- We need to approve

- the final legal language today.

That second version matches how people naturally parse the sentence.

Editing heuristic: If a subtitle makes sense only after the second line appears, rewrite the break.
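Part of this heuristic can be approximated in a QC script. The sketch below flags cues whose line ends on a word that normally belongs with what follows. The word list is a small, English-only assumption for illustration; real segmentation rules are richer than this.

```javascript
// Words that usually belong with the text that follows them.
// This list is a small English-only assumption, not a complete rule set.
const WEAK_LINE_ENDINGS = new Set([
  "a", "an", "the", "of", "to", "in", "on", "at", "for", "with",
  "and", "or", "but", "that", "which",
]);

// Return true when any non-final line of a cue ends on a "weak" word.
function hasWeakLineBreak(cueText) {
  const lines = cueText.split("\n");
  return lines.slice(0, -1).some((line) => {
    const words = line.trim().toLowerCase().split(/\s+/);
    // Strip punctuation so "the," and "the" are treated alike.
    const lastWord = words[words.length - 1].replace(/[^a-z']/g, "");
    return WEAK_LINE_ENDINGS.has(lastWord);
  });
}

console.log(hasWeakLineBreak("We need to approve the\nfinal legal language today.")); // true
console.log(hasWeakLineBreak("We need to approve\nthe final legal language today.")); // false
```

A flag from this check is a prompt for an editor to look at the break, not an automatic fix.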

Sync matters more than many teams expect

Subtitles that arrive early spoil dialogue rhythm. Subtitles that arrive late make viewers work harder than they should.

The common reaction is, “It’s only slightly off.” But viewers notice timing drift quickly, especially in interviews, training videos, and scenes with visible mouth movement. Once sync feels unstable, trust in the whole asset drops.

A practical timing checklist

Use this as a review pass before publishing:

1. Check the in-time: The subtitle should appear when the spoken phrase begins, not long before it.

2. Check the out-time: Leave the text on screen long enough to finish reading it, but not so long that it lingers into the next idea.

3. Watch transitions: Quick back-to-back cues need careful spacing so the viewer can register the change.

4. Review difficult audio: Overlapping speakers, accents, and fast exchanges often need manual adjustment.

5. Test with sound low or off: This reveals whether the subtitle track can carry the message on its own.
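The out-time and transition checks lend themselves to automation. Here is a minimal sketch that flags overlapping cues and very short gaps. It assumes cues are sorted by start time and carry startMs and endMs fields (hypothetical names); the 80 ms default is an assumption (two frames at 25 fps), not a standard.

```javascript
// Flag cue transitions that overlap or leave less than minGapMs between cues.
// Assumes cues are sorted by start time.
function findTransitionIssues(cues, minGapMs = 80) {
  const issues = [];
  for (let i = 1; i < cues.length; i++) {
    const gap = cues[i].startMs - cues[i - 1].endMs;
    if (gap < 0) {
      issues.push({ cue: i + 1, type: "overlap", gapMs: gap });
    } else if (gap < minGapMs) {
      issues.push({ cue: i + 1, type: "short-gap", gapMs: gap });
    }
  }
  return issues;
}

const timedCues = [
  { startMs: 1000, endMs: 3500 },
  { startMs: 3520, endMs: 6000 }, // only 20 ms after the previous cue
  { startMs: 5800, endMs: 8000 }, // starts before the previous cue ends
  { startMs: 9000, endMs: 11000 },
];

const transitionIssues = findTransitionIssues(timedCues);
console.log(transitionIssues);
```

The cue numbers in the result are 1-based so they line up with what an editor sees in an SRT file.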

Timing and wording are connected

A lot of new editors think timing and text are separate problems. They aren’t. If dialogue is too fast to subtitle cleanly, the answer may be to condense wording while preserving meaning.

For instance, spoken language often contains repetitions, filler, and false starts. A subtitle doesn’t always need all of them. It needs the meaning the viewer must understand.

That’s especially important in documentary, legal, medical, and newsroom work. In those environments, editors need to preserve meaning carefully while still making the text readable. Precision matters, but legibility does too.

A short quality-control table

| Check | What to look for | Why it matters |
|---|---|---|
| Line length | No overlong lines | Reduces eye strain and screen clutter |
| Reading speed | Text can be read comfortably | Prevents viewers from falling behind |
| Segmentation | Breaks follow sentence meaning | Improves comprehension |
| Sync | Text matches speech closely | Keeps viewing natural and trustworthy |
| Consistency | Similar choices across the full video | Makes the track feel professional |

Good subtitle timing is craft work. The viewer should never have to think, “These subtitles are hard to follow.” They should just keep watching.

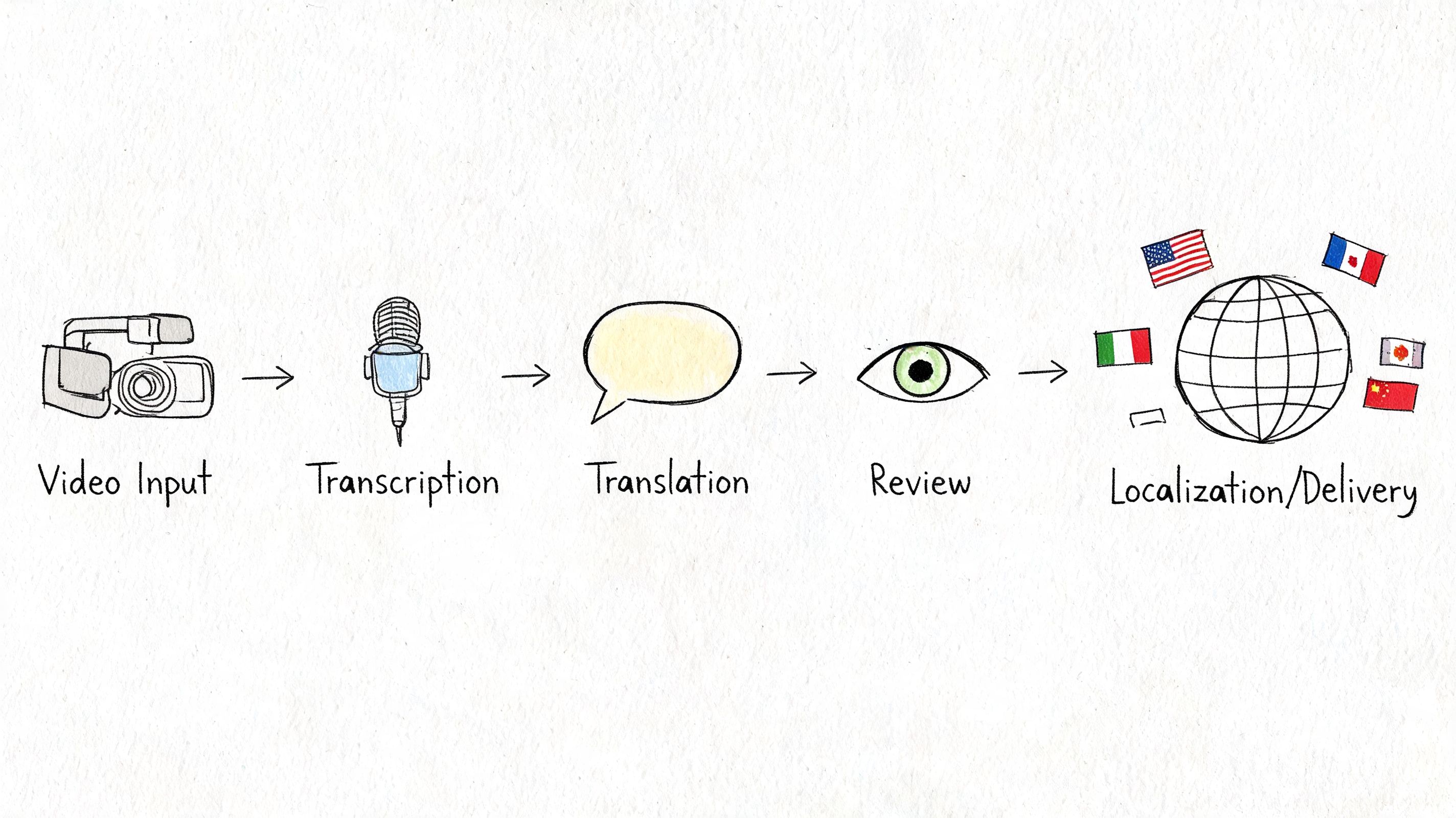

The Modern Subtitle Creation and Localization Workflow

Most subtitle problems don’t begin in the subtitle editor. They begin upstream, when teams treat subtitles as a single task instead of a workflow.

In practice, subtitle creation has several linked stages. If one stage is rushed, the next one gets harder. A weak transcript creates bad timing. Bad timing creates painful QA. Poor QA creates accessibility and localization problems later.

Stage one starts with transcription

Everything begins with a faithful text version of the audio. If names, terms, or speaker turns are wrong here, those errors spread into every later output.

A manual workflow usually means someone listens, types, rewinds, and corrects. That can work for short, high-stakes material, but it slows down quickly when a team handles large media volumes. An AI-assisted workflow speeds up first-pass transcription, then lets an editor review terminology, speaker labels, and edge cases.

Timing and segmentation shape usability

Once the words exist, they have to be turned into readable subtitle cues. That means deciding:

- where each cue starts and ends

- how much text appears at once

- where lines break

- how to handle fast or overlapping speech

Many “accurate transcript” projects fail as subtitle projects. A transcript can be correct and still make poor subtitles if it isn’t segmented for reading.

Quality control is its own discipline

Reviewers often focus on wording and miss technical quality. Subtitle QC also includes sync, reading speed, formatting, and consistency.

That matters because different stakeholders notice different failures. An accessibility reviewer may flag missing sound context. A broadcaster may care about timing precision. A legal team may focus on wording accuracy. A product team may care about whether the subtitles render correctly in the player.

Subtitle QA works best when editors review with the actual playback environment in mind, not just the text file.

Localization changes more than language

Translation is not a find-and-replace operation. It changes text length, cultural references, line breaks, and timing pressure.

A short English line may expand in another language and no longer fit cleanly in the same cue. That means localized subtitles often need retiming and resegmentation, not just translated text dropped into existing timestamps. Teams handling multilingual projects often run into this quickly when moving from one-language publishing to cross-border distribution. That’s where workflows tied to Spanish-to-English translation and multilingual review become operational, not optional.

Manual versus AI-assisted workflows

Here’s the clearest way to compare them:

| Workflow step | Manual-heavy approach | AI-assisted approach |
|---|---|---|
| Transcription | Slow but controllable | Faster first draft with editor review |
| Timing | Done cue by cue by hand | Draft timing can be generated, then refined |
| QC | Reviewer catches both text and technical issues | Reviewer focuses more on exceptions and edge cases |
| Localization | Hard to scale across many assets | Easier to replicate across volume, with human checks |

The most effective setups usually combine both. AI handles repetitive first-pass work. Humans handle quality decisions, sensitive content, terminology, and final approval.

That hybrid model is especially useful in media, healthcare, legal, education, and support environments, where subtitles need to be both scalable and dependable.

Using Vatis Tech for Accurate Subtitles at Scale

The hardest subtitle problem for many teams isn’t generating text. It’s generating text they can trust enough to publish after review.

That matters because silent viewing and accessibility needs have pushed more organizations to add subtitles to more content, but quality still breaks down in practice. 85% of Facebook videos are watched without sound, while auto-generated captions often reach only 60% to 80% accuracy, according to TestParty’s accessibility statistics roundup. For teams publishing training, news, support, legal, or healthcare content, that gap creates real review work.

A typical workflow inside Vatis Tech starts with uploading audio or video, generating a transcript, reviewing the text in an editor, adjusting subtitle timing, and exporting to formats such as SRT or VTT. The platform description provided by the publisher also cites 98%+ accuracy, along with editable transcripts, timestamps, speaker diarization, multilingual output, API access, and security features including GDPR alignment and ISO 27001 certification.

A realistic production scenario

Say a compliance team needs subtitles for a customer education video.

The source file includes multiple speakers, product terms, and a few sections where the speaker talks quickly. The team’s job isn’t just to “add captions.” They need a subtitle track that viewers can follow, that an internal reviewer can verify, and that can be exported cleanly for the company site and video host.

A practical sequence looks like this:

1. Upload the media: The team starts with a video or audio file and generates a first transcript.

2. Review terminology: Product names, legal phrases, and speaker changes usually need a human pass.

3. Adjust subtitle cues: The editor checks line length, breaks long thoughts into shorter cues, and aligns the text to speech.

4. Export for distribution: The finished track is saved in the format required by the destination platform.

Why editor control still matters

Even with strong speech recognition, subtitle publishing still needs review. The most common trouble spots are predictable:

- Domain terminology: Medical, legal, or technical vocabulary can be misheard.

- Speaker overlap: Two people talking at once creates messy text segmentation.

- Fast delivery: Spoken language often needs subtitle condensation.

- Noise and audio quality: Background sound still affects first-pass output.

That’s why an integrated editor matters more than raw transcription alone. Teams need to correct wording, inspect timestamps, and make subtitle-specific decisions in one place rather than moving between disconnected tools.

API use case for developers

For product and engineering teams, subtitles are often part of a larger workflow. A support platform may need transcripts for uploaded calls. A newsroom may need subtitles attached to published clips. A media archive may want searchable transcripts plus subtitle exports.

At a conceptual level, the flow looks like this:

    // Conceptual example only
    const job = await transcribeVideo({
      file: "training-video.mp4",
      output: ["transcript", "srt", "vtt"],
      language: "en"
    });

    // Review transcript and subtitle timing in your app or editor
    // Then publish the exported subtitle file with the video asset

The point isn’t the syntax. It’s the architecture. Developers can treat subtitles as structured output from the same speech pipeline that also powers transcripts, timestamps, speaker separation, summaries, or redaction workflows.

High subtitle quality usually comes from a review loop, not from one-click generation alone.

Where this fits best

This kind of workflow is especially useful when teams need both volume and control:

| Team | Subtitle need |
|---|---|
| Broadcasters and newsrooms | Fast turnaround with editor review |
| Healthcare teams | Careful wording and compliance-sensitive handling |
| Legal teams | Verifiable spoken content with precise review |
| Customer education teams | Clear, readable subtitles across many videos |
| Developers | Programmatic subtitle generation inside apps and platforms |

The key takeaway is practical. Subtitle tools shouldn’t be judged only by whether they generate text. They should be judged by whether your team can review, correct, export, and publish reliable subtitles without rebuilding the workflow around the tool.

How to Embed and Troubleshoot Your Subtitles

Creating subtitles is only half the job. The other half is making sure they display properly where people watch.

A clean subtitle file can still fail at upload, render incorrectly, or drift out of sync during playback. Technical subtitle violations account for 45% of viewer complaints, and QC checks commonly verify reading speed, character limits, and 2 to 4 frame gaps between cues according to VideoTap’s subtitle QC best practices. That’s why deployment deserves as much attention as creation.

Embedding subtitles on video platforms

Most mainstream platforms let you upload subtitle files directly through the video settings interface. The exact menu names vary, but the workflow is usually similar:

1. Open the video settings: Look for subtitles, captions, or language tracks.

2. Choose the subtitle language: Label it clearly. This matters later if you add multiple languages.

3. Upload the subtitle file: Use the format your platform accepts, usually SRT and often VTT.

4. Preview before publishing: Don’t trust a successful upload message on its own. Watch the file in the player.

5. Check mobile playback: Subtitle display can differ between desktop and mobile players.

For YouTube, Vimeo, and similar hosts, the review step matters most. A file can upload successfully and still contain line breaks, timing, or encoding issues that only show up in playback.

Embedding subtitles with HTML5 video

If you’re publishing video on your own site, subtitles are commonly attached with a track element inside the video player.

A simplified example:

    <video controls width="100%">
      <source src="demo-video.mp4" type="video/mp4">
      <track
        src="subtitles-en.vtt"
        kind="subtitles"
        srclang="en"
        label="English">
    </video>

A few practical notes:

- VTT is commonly used for web players

- Language labels should match the actual track

- Default behavior depends on player configuration

- Always test in the browsers your audience uses
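When a page offers several languages, the track markup is often generated rather than written by hand. The sketch below builds track elements from a list of subtitle files so that language codes and labels stay paired. The function names and the isDefault field are illustrative assumptions; src, kind, srclang, label, and default are the standard track attributes.

```javascript
// Escape a value for use inside a double-quoted HTML attribute.
function escapeAttr(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Build <track> markup for a list of subtitle files.
// Only the first track flagged isDefault receives the `default` attribute.
function buildTrackTags(tracks) {
  let defaultUsed = false;
  return tracks
    .map((t) => {
      const useDefault = Boolean(t.isDefault) && !defaultUsed;
      if (useDefault) defaultUsed = true;
      return (
        `<track src="${escapeAttr(t.src)}" kind="${escapeAttr(t.kind || "subtitles")}" ` +
        `srclang="${escapeAttr(t.srclang)}" label="${escapeAttr(t.label)}"` +
        `${useDefault ? " default" : ""}>`
      );
    })
    .join("\n");
}

const trackTags = buildTrackTags([
  { src: "subtitles-en.vtt", srclang: "en", label: "English", isDefault: true },
  { src: "subtitles-es.vtt", srclang: "es", label: "Español" },
]);
console.log(trackTags);
```

The generated lines go inside the video element, after the source element, exactly where the hand-written track sits in the example above.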

Common problems and fixes

Subtitles show strange characters

If apostrophes, accented letters, or non-Latin text render as broken symbols, the problem is often character encoding.

Fixes:

- Save the file in UTF-8: This is the safest baseline for multilingual subtitle files.

- Re-export from the editor: Manual text-editor saves sometimes introduce problems.

- Test with a known-good player: This helps confirm whether the issue is the file or the platform.
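A quick way to confirm an encoding problem before upload is to check whether the file's bytes decode cleanly as UTF-8. This is a small sketch using the standard TextDecoder API in strict mode; the isValidUtf8 name is illustrative.

```javascript
// Return true when the bytes decode cleanly as UTF-8.
// TextDecoder with { fatal: true } throws on invalid byte sequences.
function isValidUtf8(bytes) {
  try {
    new TextDecoder("utf-8", { fatal: true }).decode(bytes);
    return true;
  } catch {
    return false;
  }
}

const utf8Bytes = new TextEncoder().encode("Café déjà vu");
// "Café" saved as Latin-1: é is the single byte 0xE9, which is not valid UTF-8 here.
const latin1Bytes = new Uint8Array([0x43, 0x61, 0x66, 0xe9]);

console.log(isValidUtf8(utf8Bytes));   // true
console.log(isValidUtf8(latin1Bytes)); // false
```

In Node.js, the Buffer returned by fs.readFileSync(path) can be passed straight to this function. A false result usually means the file was saved in a legacy encoding and should be re-exported as UTF-8.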

Subtitles drift over time

A subtitle track may start correctly, then slowly move out of sync in a longer video.

Common causes include export mismatches, frame rate issues, or a timing offset introduced during editing.

Try this:

- Check whether the source video changed after subtitle export

- Confirm the subtitle file matches the final cut

- Use a subtitle editor to shift timing globally

- If drift increases gradually, inspect frame rate assumptions
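The two cases call for different fixes: a constant offset needs a global shift, while gradual drift usually needs a rescale. A rough sketch of both, assuming cues with startMs and endMs fields (hypothetical names) and assuming the drift comes from the same frames being played at a different frame rate:

```javascript
// Shift every cue by a fixed offset (positive = later, negative = earlier).
function shiftCues(cues, offsetMs) {
  return cues.map((c) => ({
    ...c,
    startMs: Math.max(0, c.startMs + offsetMs),
    endMs: Math.max(0, c.endMs + offsetMs),
  }));
}

// Rescale cue times when subtitles were timed against one frame rate and the
// same frames are played back at another. Frame N sits at N / fps seconds, so
// each timestamp is multiplied by timedFps / playbackFps.
function rescaleCues(cues, timedFps, playbackFps) {
  const factor = timedFps / playbackFps;
  return cues.map((c) => ({
    ...c,
    startMs: Math.round(c.startMs * factor),
    endMs: Math.round(c.endMs * factor),
  }));
}

const lateCue = [{ startMs: 600000, endMs: 602000, text: "Ten minutes in." }];

const shifted = shiftCues(lateCue, -250);            // constant 250 ms correction
const rescaled = rescaleCues(lateCue, 25, 23.976);   // gradual drift correction
console.log(shifted[0].startMs, rescaled[0].startMs);
```

If a rescale does not fix the drift, the cut itself has probably changed, and the track needs re-aligning against the final video rather than a mathematical correction.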

Upload is rejected

Sometimes the platform refuses the file entirely.

Check:

- File extension: Make sure SRT is really .srt and VTT is really .vtt

- Timestamp syntax: One malformed cue can break the full upload

- Extra formatting artifacts: Copy-paste from rich text editors can introduce hidden characters

- Header requirements: VTT files need the expected opening format
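Some of these checks can run before the upload attempt. The sketch below covers the header and timing-line syntax of a VTT file only. It is not a full WebVTT validator, and the validateVtt name is illustrative.

```javascript
// A WebVTT timing line: optional hours, then mm:ss.ttt on both sides of "-->",
// optionally followed by cue settings.
const VTT_TIMING =
  /^(?:\d{2,}:)?[0-5]\d:[0-5]\d\.\d{3}\s+-->\s+(?:\d{2,}:)?[0-5]\d:[0-5]\d\.\d{3}(?:\s+.*)?$/;

// Basic pre-upload checks: header line and timing syntax only.
function validateVtt(text) {
  const problems = [];
  const lines = text.replace(/^\uFEFF/, "").split(/\r?\n/); // drop a leading BOM
  if (!/^WEBVTT(?:[ \t].*)?$/.test(lines[0])) {
    problems.push("line 1: file must start with WEBVTT");
  }
  lines.forEach((line, i) => {
    if (line.includes("-->") && !VTT_TIMING.test(line.trim())) {
      problems.push(`line ${i + 1}: malformed timing "${line}"`);
    }
  });
  return problems;
}

const goodVtt = "WEBVTT\n\n00:00:01.000 --> 00:00:03.500\nWelcome to the product demo.\n";
// SRT-style commas pasted into a VTT file: a common cause of rejected uploads.
const badVtt = "WEBVTT\n\n00:00:01,000 --> 00:00:03,500\nWelcome to the product demo.\n";

console.log(validateVtt(goodVtt));
console.log(validateVtt(badVtt));
```

Reporting the line number matters here, because one malformed cue in a long file is otherwise hard to find by eye.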

The subtitles feel too fast even though they’re “correct”

This is a classic QC issue. The words may match the audio, but the subtitle track still overwhelms the viewer.

Review these points:

- Reading speed

- Line length

- Cue spacing

- Segmentation by meaning

A quick pre-publish checklist

| Check | What to confirm |
|---|---|
| File format | The destination platform supports your export type |
| Encoding | Special characters display correctly |
| Sync | Early, middle, and late sections all align |
| Readability | Cues are comfortable to read in real playback |
| Language label | The track is named correctly for users |

The final test is simple. Watch the video once as a viewer, not as the editor. Turn sound low or off. If the subtitles carry the message cleanly without pulling attention away from the content, they’re ready.

If your team needs a practical way to turn audio and video into editable transcripts and subtitle files, Vatis Tech offers speech-to-text, subtitle export, multilingual workflows, and API access that can fit publishing, compliance, and product use cases.